Testing Text Messaging as a Complement to Door-to-Door Canvassing

In the fall of 2025, the Center for Campaign Innovation conducted a randomized voter turnout experiment during the Virginia gubernatorial election. The experiment was designed to answer a practical question that many campaigns face but few have rigorously tested: does adding text messages to a door-to-door canvassing operation improve voter turnout, and if so, when and how?

Canvassing remains one of the most effective voter contact methods available, but it is also one of the most expensive. Text messaging, by contrast, costs almost nothing to deliver. If a small investment in texting can boost turnout or lower the cost per vote, the implications for competitive races are significant. But if texts add noise without value, campaigns risk wasting time on coordination for no measurable benefit.

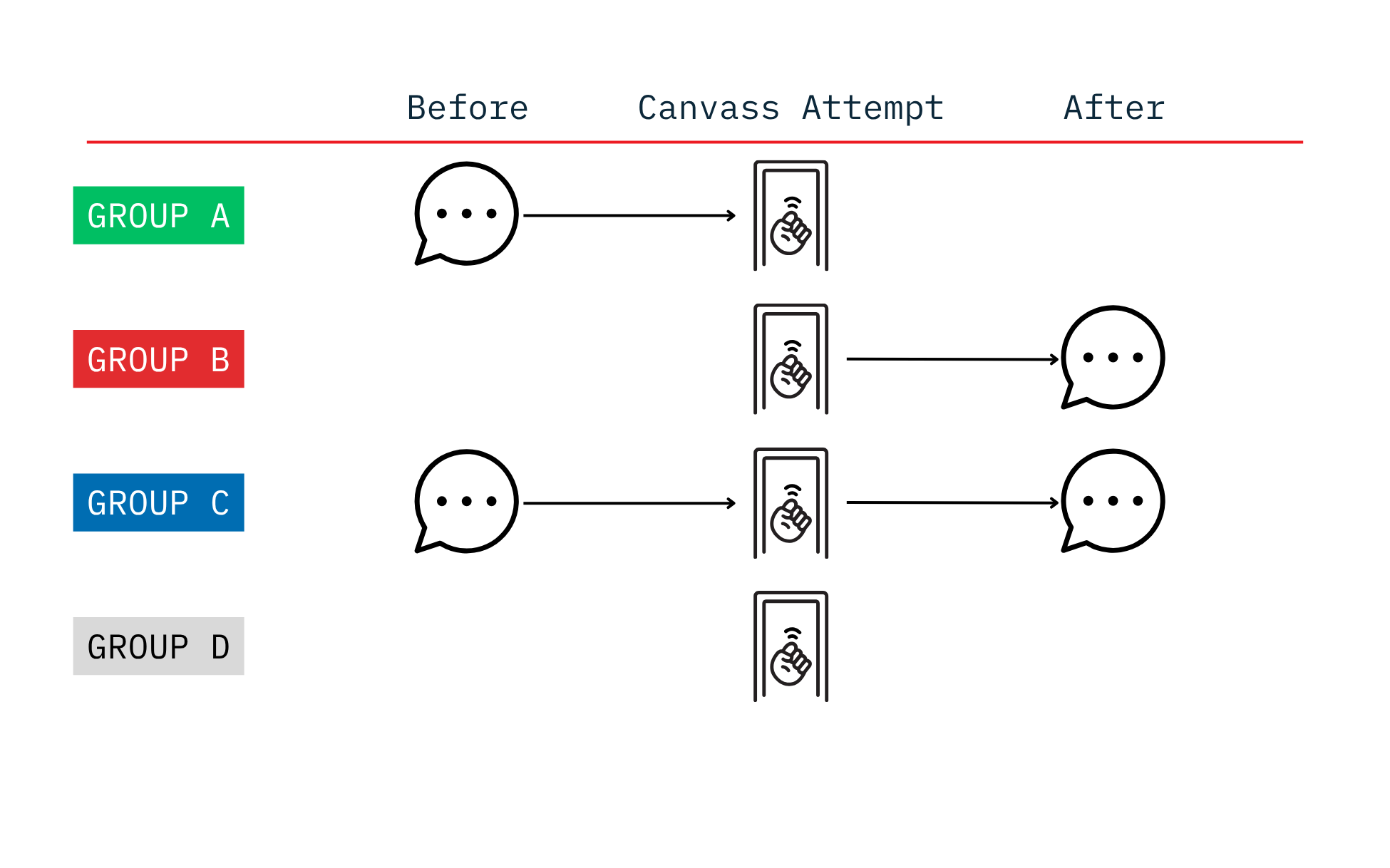

Across two phases of the experiment, voters were randomly assigned to one of four groups.

- Group A received a text message before a canvass attempt.

- Group B received a text message after a canvass attempt.

- Group C received a text message before AND after a canvass attempt.

- Group D served as the control and only received a canvass attempt.

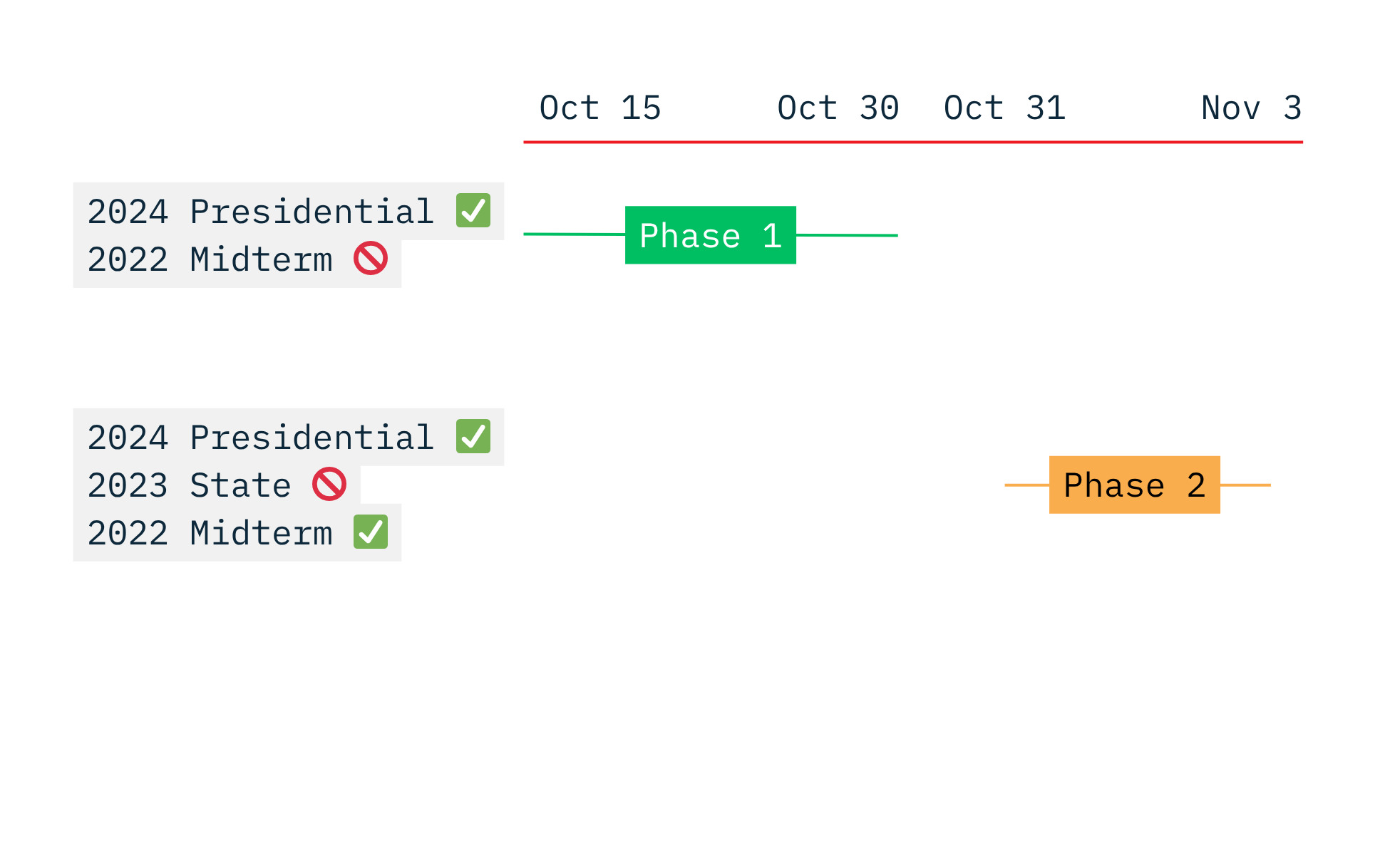
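The report does not describe the mechanics of the randomization, so the following is only a minimal sketch of one common way to produce a four-arm assignment like the one above. The equal allocation across arms, the fixed seed, the function name `assign_arms`, and the voter IDs are all assumptions made for illustration.

```python
import random
from collections import Counter

ARMS = ["A", "B", "C", "D"]  # A: pre-text, B: post-text, C: both, D: canvass only

def assign_arms(voter_ids, seed=2025):
    """Shuffle voters, then deal them round-robin into the four arms."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    shuffled = list(voter_ids)
    rng.shuffle(shuffled)
    return {vid: ARMS[i % len(ARMS)] for i, vid in enumerate(shuffled)}

# Hypothetical voter IDs, for illustration only.
assignment = assign_arms(range(1, 1001))
arm_sizes = Counter(assignment.values())
print(arm_sizes)
```

Shuffling first and then dealing round-robin keeps the arms the same size, which makes turnout comparisons between groups easier to read.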

In Phase 1, we targeted infrequent conservative voters who had cast ballots in the 2024 presidential election but showed no vote history in the 2022 midterms. In Phase 2, we targeted more frequent conservative voters who had cast ballots in both 2024 and 2022 but did not vote in the 2023 state elections.

The topline results show modest overall movement, with post-canvass texting producing the strongest pooled performance. The clearest and most durable gains appear among women, where both the pre-text and post-text treatments lift turnout by about four points over the control in pooled results. At the same time, texting does not appear to improve canvassing performance itself. The most plausible interpretation is that any effect operates after exposure, through reinforcement, reminder, or salience effects outside the door interaction.

Our Approach

This experiment did not test whether canvassing works. It tested whether adding text messages around a canvass attempt changes the effectiveness of a canvass program that is already in motion.

Every voter in the study received a single canvass attempt. This means the control condition is canvass only, not no contact. It also means the post-canvass text in Group B could not influence whether the door was answered on the attempt itself. The text could still influence turnout afterward, but it could not raise the completion rate at the moment of contact.

The four treatment groups were designed to isolate timing effects. The per-voter cost structure was simple and stable across groups. As a result, differences in cost-effectiveness are driven primarily by turnout, not by large differences in spending.

We evaluated the experiment in three ways. First, we looked at turnout, which is the central outcome. Second, we looked at canvass response metrics, including completion rate, not-home rate, and refusal rate, to test whether texting changed field performance at the door. Third, we translated the turnout results into cost terms, including cost per vote and cost per incremental vote, because campaigns make decisions in terms of budgets as much as percentages.

Because this test was run in a real campaign setting where we expect only small effects, it’s important to look at the results in two ways. Looking at each phase separately helps us see whether the pattern holds up or changes over time. Combining both phases gives us the clearest picture of how big the overall impact actually is.

Results

Turnout

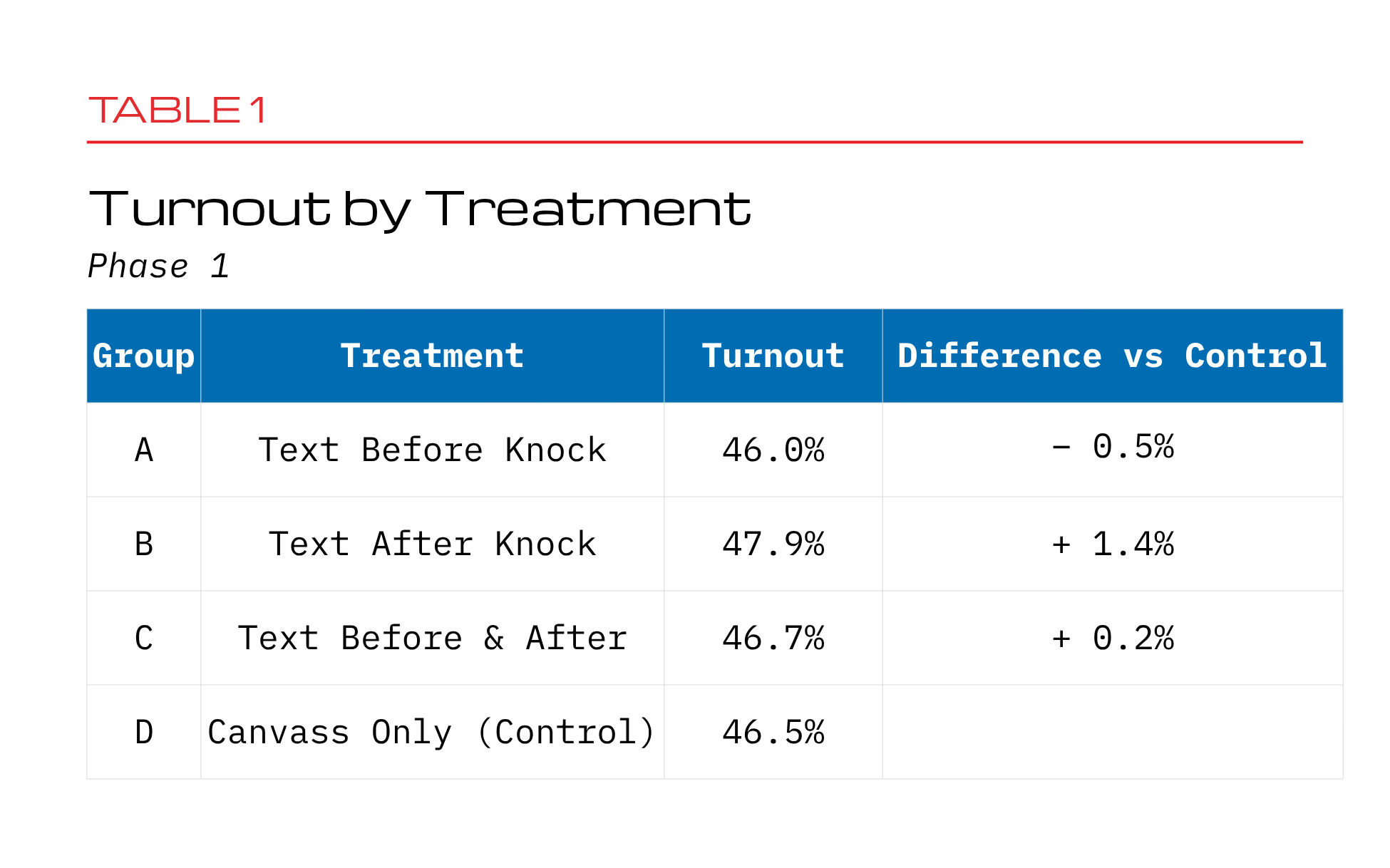

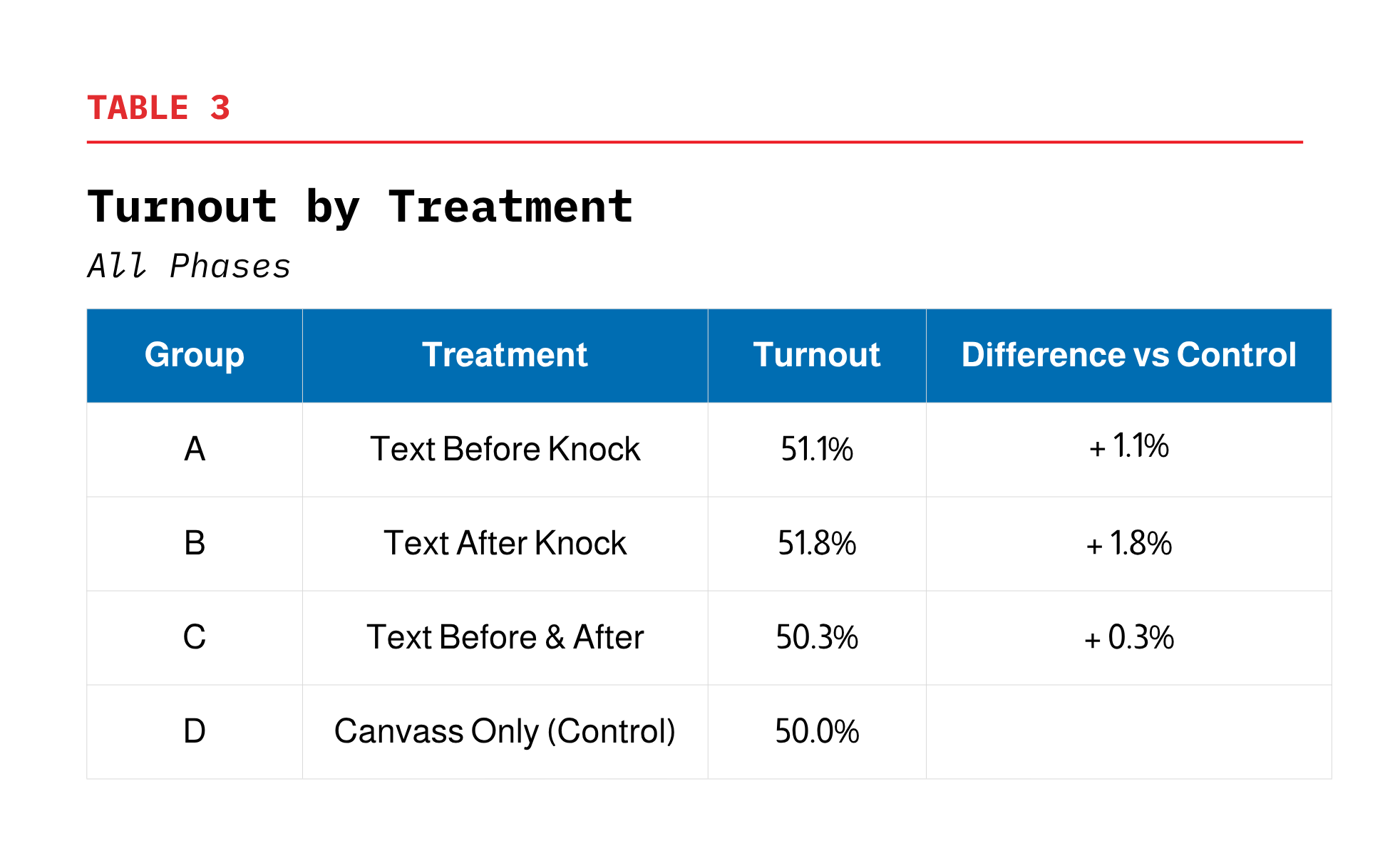

Turnout differences across the groups were generally small, though texting after the canvass attempt (Group B) performed best when looking at the full set of results.

In Phase 1, turnout in the canvass-only control group (D) was 46.5%. Group A was slightly lower at 46.0%, while Groups B and C were slightly higher at 47.9% and 46.7%, respectively. That means Group B had the strongest result in this phase, improving turnout by about 1.4 points, while Groups A and C were within half a point of the baseline.

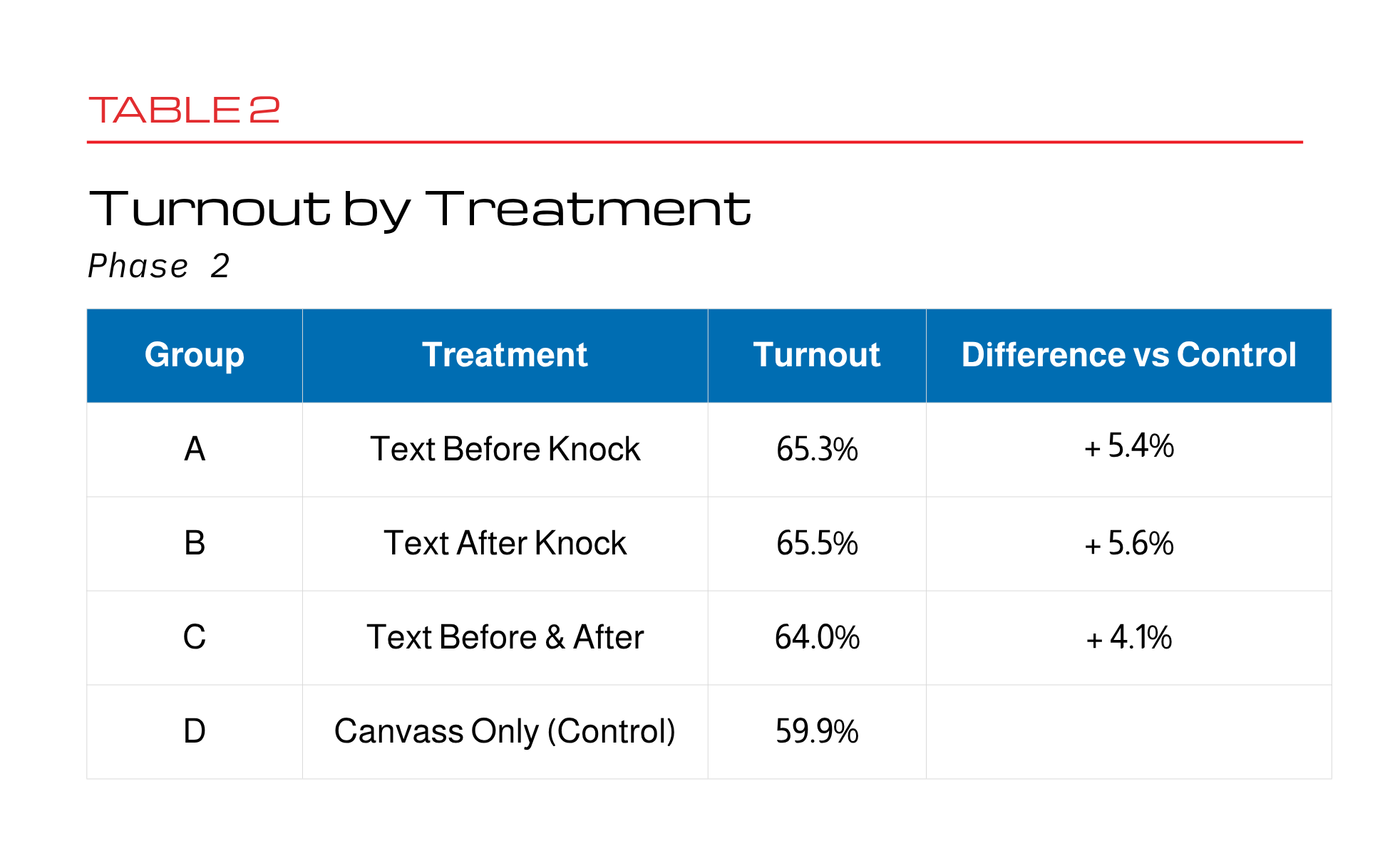

In Phase 2, turnout was higher overall and the differences between groups were larger. The control group turned out at 59.9%, while Groups A, B, and C ranged from 64.0% to 65.5%. All three treatments outperformed the control, with Groups A and B showing the largest gains.

When we combine both phases, the results settle into a more consistent pattern. Turnout is 50.0% in the control group, compared to 51.1% in Group A, 51.8% in Group B, and 50.3% in Group C. These differences are too small to support firm statistical conclusions, but their direction is consistent. Group B performs best, while Group C, which adds an extra text, does not improve on the simpler approaches.
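The pooled comparison above comes down to a percentage-point difference between each treatment group and the control. A minimal sketch of that arithmetic, using the rounded turnout figures reported in this section (the function name `lift_vs_control` is ours, chosen for illustration):

```python
# Pooled turnout by group, in percent, as reported above.
pooled_turnout = {"A": 51.1, "B": 51.8, "C": 50.3, "D": 50.0}

def lift_vs_control(turnout, control="D"):
    """Percentage-point difference between each treatment group and control."""
    base = turnout[control]
    return {g: round(t - base, 1) for g, t in turnout.items() if g != control}

lifts = lift_vs_control(pooled_turnout)
print(lifts)  # {'A': 1.1, 'B': 1.8, 'C': 0.3}
```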

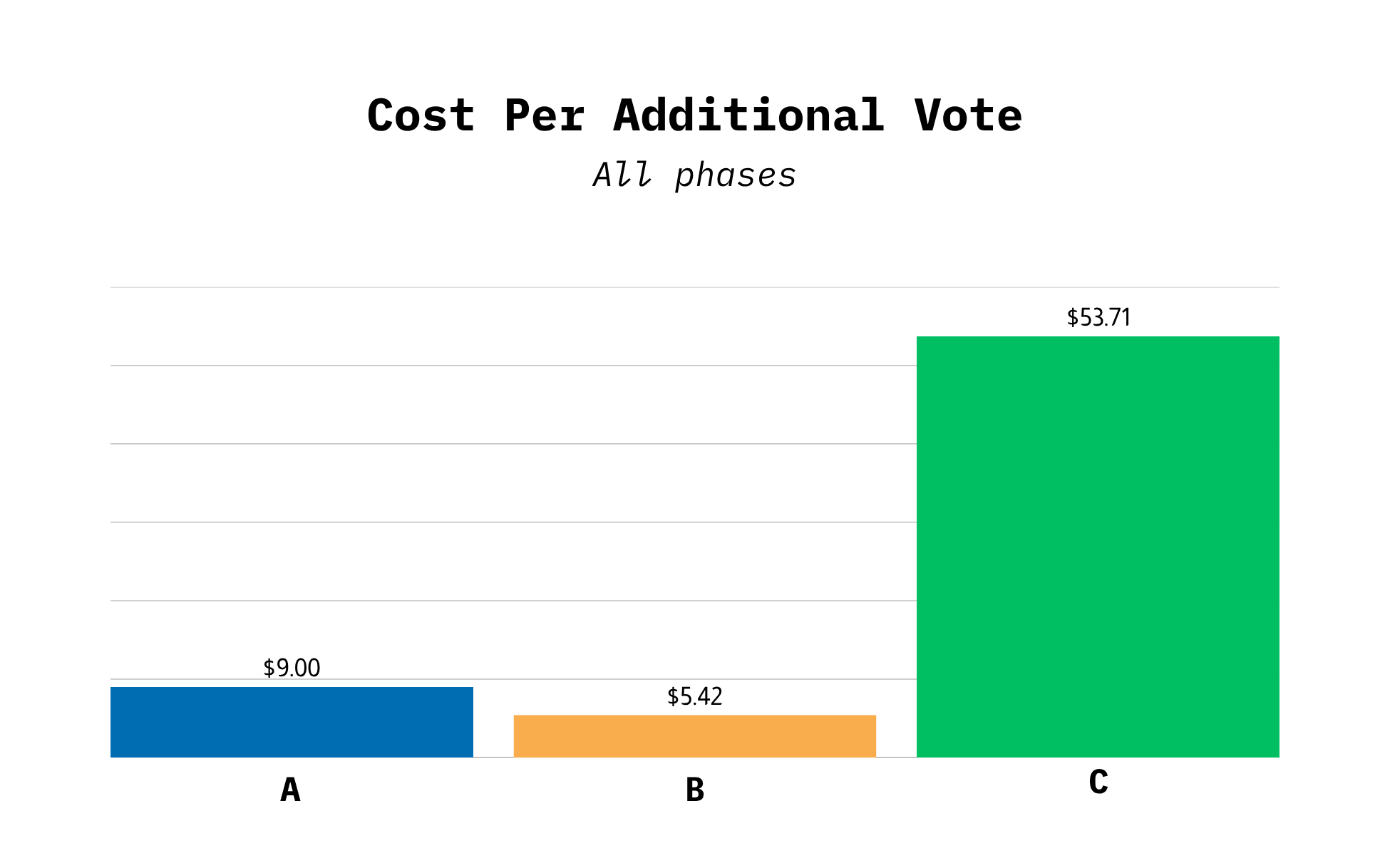

Cost

Because the costs in this test were straightforward, it’s easier to compare how efficient each approach was. For the purposes of this analysis we estimate a canvass attempt cost of $5.00 and a text message cost of $0.10. Looking at both phases together, the estimated cost per additional vote was $9.00 for Group A, $5.42 for Group B, and $53.71 for Group C.
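The report does not spell out its cost formulas, so the sketch below assumes two common definitions: cost per vote is total spending per voter divided by the turnout rate, and cost per incremental vote is the added texting cost per voter divided by the turnout lift over control. The inputs are the rounded pooled turnout figures and the cost estimates given above; the function names are ours.

```python
CANVASS_COST = 5.00  # estimated cost per canvass attempt, from the report
TEXT_COST = 0.10     # estimated cost per text message, from the report

texts_per_voter = {"A": 1, "B": 1, "C": 2, "D": 0}
pooled_turnout = {"A": 0.511, "B": 0.518, "C": 0.503, "D": 0.500}

def cost_per_vote(group):
    """Total program cost per voter divided by that group's turnout rate."""
    cost = CANVASS_COST + TEXT_COST * texts_per_voter[group]
    return cost / pooled_turnout[group]

def cost_per_incremental_vote(group, control="D"):
    """Added texting cost per voter divided by the turnout lift over control."""
    added_cost = TEXT_COST * (texts_per_voter[group] - texts_per_voter[control])
    lift = pooled_turnout[group] - pooled_turnout[control]
    return added_cost / lift

for g in "ABC":
    print(g, round(cost_per_vote(g), 2), round(cost_per_incremental_vote(g), 2))
```

With rounded inputs this gives roughly $9.09, $5.56, and $66.67 per incremental vote for Groups A, B, and C. These are close to, but not the same as, the reported $9.00, $5.42, and $53.71. The gap presumably reflects unrounded turnout rates in the original calculation. Group C is the most sensitive because its lift is so small that a few hundredths of a point move the result by several dollars.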

The overall pattern is clear. Group B, the post-canvass text, is the most cost-effective option. Group A is slightly less efficient but still reasonable. Group C, which adds a second text, performs much worse. It costs more but doesn’t produce enough extra votes to justify the added expense.

If campaigns want to add texting to a canvassing program, the simpler options work better. In particular, sending a single text after the canvass attempt provides the best balance of cost and results.

Gender

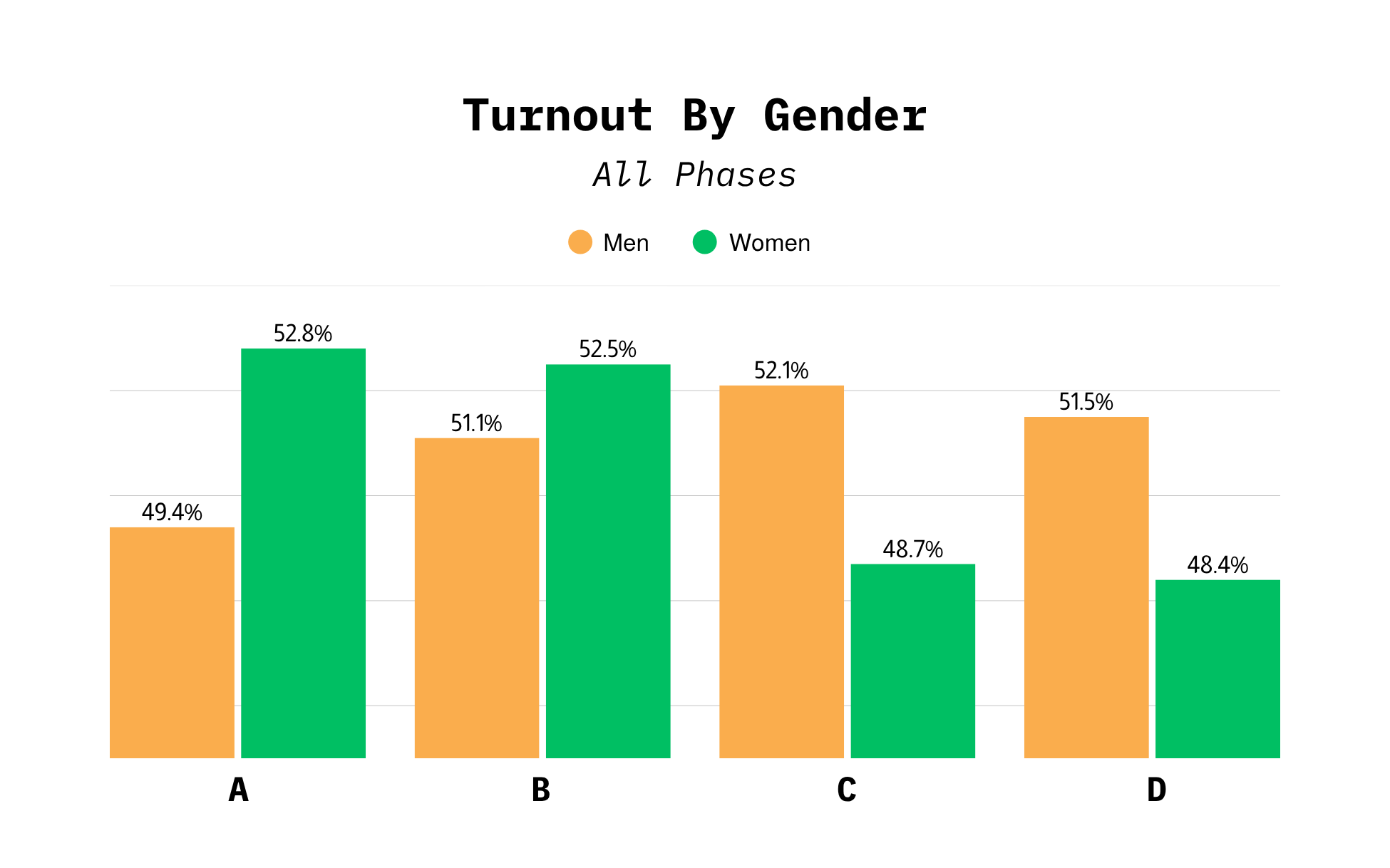

Looking at the results by gender changes the story in an important way. Among women, both the pre-text (Group A) and post-text (Group B) treatments increased turnout by about 4 points compared to the control group. That’s a meaningful difference and shows up across both phases of the experiment. The combined approach (Group C), which used two texts, did not produce the same benefit.

Men, however, show a very different pattern. Turnout rates are almost the same across all groups, with no clear improvement from texting. In other words, the treatments that helped with women did not have the same effect on men.

This matters for how campaigns use these tactics. If you apply this approach to everyone, the gains among women get diluted by the lack of movement among men. But if you focus on female voters, you capture most of the benefit. The takeaway is simple: the impact is real, but it’s not evenly distributed, and targeting matters.
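The dilution argument can be made concrete with a weighted average. In this sketch the 4-point lift among women comes from the results above, while the 50% share of women in the universe and the zero lift among men are simplifying assumptions, not reported figures.

```python
def blended_lift(share_women, lift_women, lift_men):
    """Overall lift (points) as a population-weighted average of subgroup lifts."""
    return share_women * lift_women + (1 - share_women) * lift_men

# Assumptions for illustration: women are half the universe, men do not move.
everyone = blended_lift(share_women=0.5, lift_women=4.0, lift_men=0.0)
women_only = blended_lift(share_women=1.0, lift_women=4.0, lift_men=0.0)
print(everyone, women_only)  # 2.0 4.0
```

Because the texting cost per voter is the same either way, halving the lift doubles the cost per incremental vote. That is the practical case for targeting.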

Canvassing Response Rates

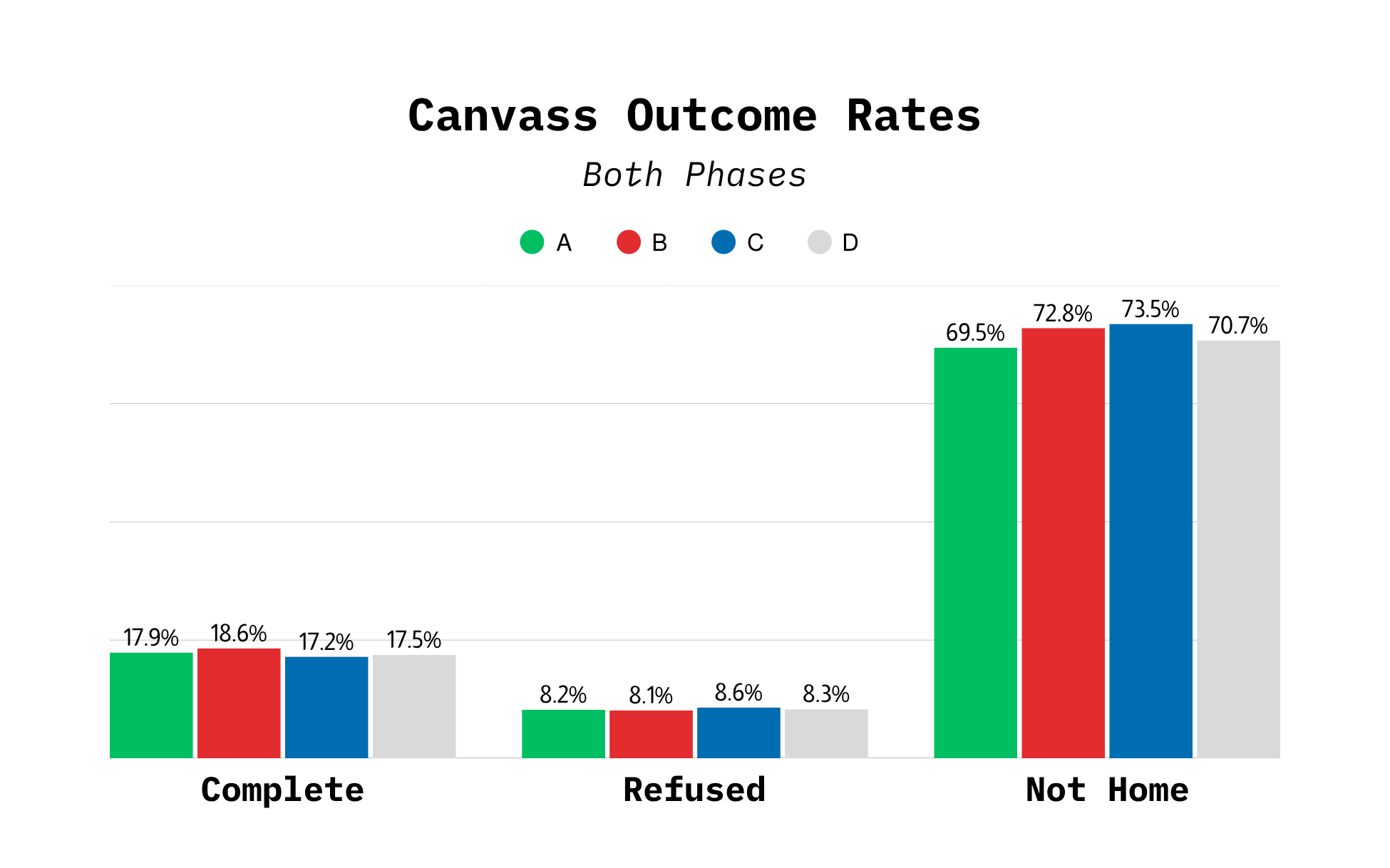

One idea going into this test was that sending a text before a canvass attempt might “prime” voters, making them more likely to answer the door or engage with the canvasser. The data does not show strong evidence of that.

In Phase 1, the key canvassing metrics were almost identical across all groups. Completion rates, refusal rates, and not-home rates were all very close, with no clear improvement from texting. In other words, adding a text did not make it easier to reach voters or get them to engage at the door.

In Phase 2, these metrics varied more, with higher completion rates in Group B and weaker results in Group C. But the text cannot explain Group B’s higher completion rate: it was sent after the canvass attempt, and each voter received only one knock, so it could not have influenced whether someone answered the door. That variation more plausibly reflects chance or differences in how canvassers recorded outcomes.

The takeaway is that texting does not appear to improve the effectiveness of canvassing itself. Instead, any impact on turnout is likely happening after the interaction, by reinforcing the message, reminding voters, or keeping the campaign top of mind. This helps clarify how campaigns should think about texting: not as a way to improve contact rates, but as a follow-up tool that may influence behavior later.
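For readers who want to reproduce the response metrics discussed in this section from their own walk data, the following is a rough sketch. It assumes each canvass attempt is logged with a single outcome code and that every rate uses total attempts as the denominator. The outcome codes and the sample data are made up for illustration and are not drawn from the experiment.

```python
from collections import Counter

def response_rates(dispositions):
    """Share of canvass attempts ending in each recorded outcome."""
    counts = Counter(dispositions)
    total = len(dispositions)
    return {outcome: counts[outcome] / total for outcome in counts}

# Made-up door outcomes for one group; the codes themselves are hypothetical.
attempts = ["complete"] * 2 + ["not_home"] * 6 + ["refused"] * 2
rates = response_rates(attempts)
print(rates)  # {'complete': 0.2, 'not_home': 0.6, 'refused': 0.2}
```

Running the same function on each group's attempts and comparing the results side by side is one way to check whether a pre-canvass text changed anything at the door.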

What This Means

The biggest takeaway from this test is simple: if you’re going to add texting to a canvassing program, sending a text after the canvass attempt is the best place to start. It showed the most consistent results, the best cost efficiency, and didn’t create any operational issues.

The next takeaway is about targeting. The strongest results in this experiment came from women. In the combined data, women who received a single text, whether before or after the canvass attempt, were about 4 points more likely to vote than women who received only a canvass attempt. That’s a meaningful lift. It also appears to be relatively cost-effective, with each additional vote costing just a few dollars in these groups. While that estimate isn’t exact, it points to where campaigns are most likely to see results.

It’s also important not to assume that more outreach automatically means better outcomes. The group that received two texts did not perform better and often performed worse. Adding complexity increases costs, and those costs need to be justified by better results. In this case, they were not.

Finally, campaigns should keep testing. This experiment shows that both timing and audience matter, but neither should be treated as settled. The results suggest that who you target may matter as much as, if not more than, when you send the message.

Limitations

The turnout comparisons in this experiment come from randomized assignment. That means the groups were comparable at the outset, so the differences we observe between them reflect the treatments or chance, not systematic outside factors.

At the same time, the effects we’re looking for are small. Differences of one or two points are hard to detect with certainty, especially in smaller samples. That’s why looking at the combined results across both phases gives the most reliable picture.
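To give a sense of scale, the sketch below uses a standard textbook approximation for the sample size needed to detect a difference between two proportions. The 5% two-sided significance level and 80% power are conventional choices we are assuming here, not parameters taken from the experiment, and the function name `n_per_group` is ours.

```python
import math
from statistics import NormalDist

def n_per_group(p_control, p_treat, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treat * (1 - p_treat)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (p_treat - p_control) ** 2)

# Pooled control turnout (50.0%) versus pooled Group B turnout (51.8%).
n_needed = n_per_group(0.500, 0.518)
print(n_needed)
```

Under these assumptions, reliably detecting a 1.8-point lift takes on the order of 12,000 voters in each group. Required sample size grows with the inverse square of the effect, so a lift half as large needs roughly four times as many voters.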

The gender findings are especially interesting, but they should be treated as a starting point, not a final answer. The pattern is consistent across both phases, but it would be worth confirming in a larger test before building an entire strategy around it. It’s also worth noting that a small number of voters did not have gender data, so those subgroup totals don’t match the full sample exactly.

The canvassing metrics help explain what’s going on, but they are not perfect. They depend on how canvassers record outcomes, which can vary. Small differences in those numbers should not be overinterpreted.

Conclusion

The main lesson from this experiment is that texting around a canvass attempt can have an effect, but not always in the way campaigns expect. The best-performing approach was sending a text after the canvass attempt. The clearest turnout gains are concentrated among women. Adding extra texts did not improve results and added unnecessary cost.

Just as importantly, texting did not make canvassing more effective at the door. It didn’t increase contact rates or reduce refusals. Instead, its impact appears to come later, by reinforcing the message or keeping the campaign top of mind.

For campaigns, the takeaway is to use post-canvass texting as a low-cost add-on to field programs, especially when targeting female voters. Avoid layering multiple texts without clear evidence of added benefit, and do not expect texting to improve door contact rates. Instead, treat it as a follow-up tool that can modestly increase turnout after the canvass attempt. Additional testing should focus on validating these effects at larger scale and sharpening targeting. In close races, these kinds of small, well-targeted improvements can make a meaningful difference.